Classroom AI Conversations with Guardrails, Structure, and Teacher Confidence

When teachers ask about classroom AI, one of the most common questions is not “Can it chat?” but “Can I trust it enough to use it with students?” That is exactly the problem our AI Chat app was built to solve.

The goal of AI Chat is not to hand students an open-ended chatbot and hope for the best. The goal is to give teachers a way to use AI conversation as an instructional tool inside a structured classroom environment, with clear prompts, strong boundaries, and teacher-facing oversight.

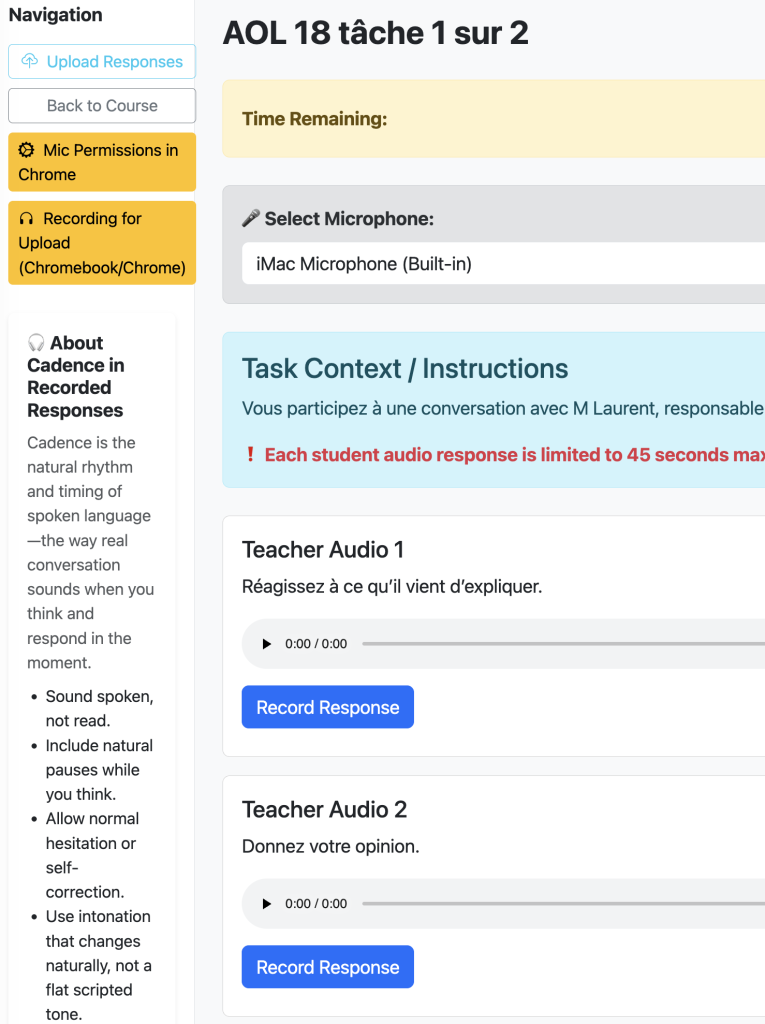

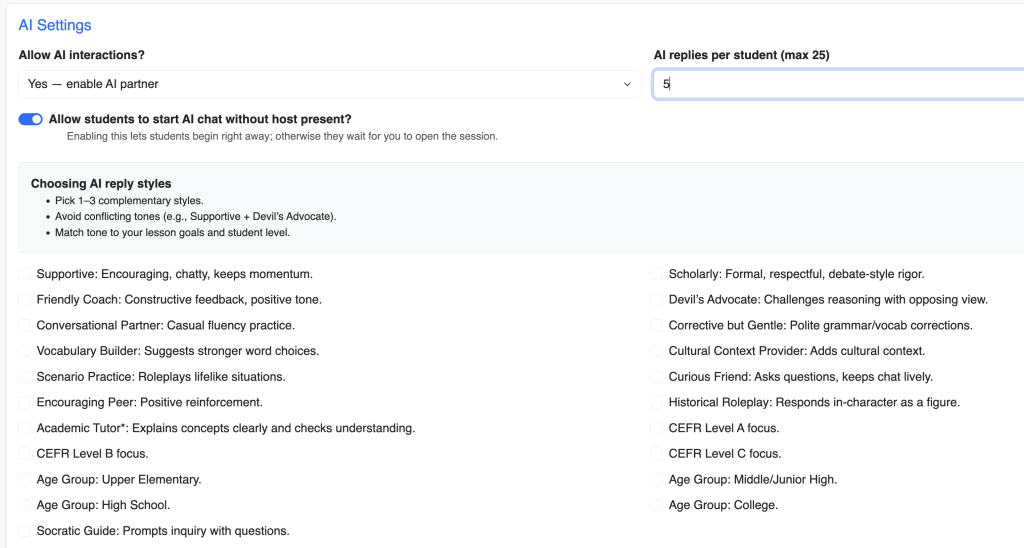

The teacher begins by designing the experience. Instead of sending students into a blank AI space, the teacher sets the context for the chat lesson. That can include the topic, the role the AI should play, the style of interaction, and the kind of responses students should practice. In other words, the teacher is not losing control of the lesson. The teacher is shaping it. The AI becomes part of the instructional design, not a replacement for it.

That design layer matters because it changes the tone of classroom AI use completely. A good AI classroom tool should not start with “Ask anything.” It should start with “Here is the conversation space, the purpose, the boundaries, and the learning goal.” AI Chat does that by grounding the experience in teacher-authored prompts and lesson framing.

Safety and guardrails are where confidence really begins. In a classroom setting, teachers need to know that the AI interaction is not just interesting but manageable. AI Chat is built with that in mind. The interaction is task-based, teacher-directed, and contained inside the app’s lesson structure. That means students are not wandering through a general consumer AI environment. They are participating in a bounded academic conversation designed for class use.

Confidence also depends on supervision. AI Chat is not just about what students see; it is also about what teachers can oversee. Classroom AI becomes much more usable when teachers know they have visibility into student work. A safe AI lesson is not only about preventing bad outcomes; it is also about preserving teacher awareness. If a tool gives structure without visibility, teachers still hesitate. AI Chat is designed to keep the instructional frame intact so the AI supports the lesson rather than taking it over.

The prompt layer is especially important here. Teachers can shape the AI to behave more like a tutor, conversation partner, role-play partner, or guided practice engine depending on the activity. That means a teacher can create targeted uses for AI instead of generic ones. In one lesson, the AI might support language practice. In another, it might guide historical role-play. In another, it might help students think through an argument or reflect on a reading. The key point is that the teacher defines the academic purpose first.
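For readers who want to picture what a prompt layer like this could look like in practice, here is a minimal sketch. It is not AI Chat's actual implementation. The names `ChatLesson`, `ALLOWED_ROLES`, and `build_system_prompt` are hypothetical, and the fields simply mirror the elements described in this post: the topic, the role the AI plays, the interaction style, and the learning goal.

```python
from dataclasses import dataclass

# Hypothetical role labels, taken from the activity types named in this post.
ALLOWED_ROLES = {
    "tutor",
    "conversation partner",
    "role-play partner",
    "guided practice engine",
}


@dataclass(frozen=True)
class ChatLesson:
    """Teacher-authored framing for one chat activity (illustrative only)."""

    topic: str
    ai_role: str
    style: str
    learning_goal: str

    def __post_init__(self):
        # Reject roles the teacher has not explicitly chosen from the list.
        if self.ai_role not in ALLOWED_ROLES:
            raise ValueError(f"Unsupported AI role: {self.ai_role!r}")


def build_system_prompt(lesson: ChatLesson) -> str:
    """Turn the teacher's framing into instructions for the AI."""
    return "\n".join([
        f"You are acting as a {lesson.ai_role} for a classroom activity.",
        f"Topic: {lesson.topic}.",
        f"Interaction style: {lesson.style}.",
        f"Learning goal: {lesson.learning_goal}.",
        "Stay on this topic and redirect off-topic requests back to the task.",
    ])


# Example: a language-practice lesson framed by the teacher.
lesson = ChatLesson(
    topic="ordering food at a cafe in Spanish",
    ai_role="conversation partner",
    style="short, friendly turns at a beginner level",
    learning_goal="practice polite requests",
)
prompt = build_system_prompt(lesson)
print(prompt)
```

The point of the sketch is the order of operations: the teacher's academic purpose is written down first, and the AI's instructions are derived from it, so a role outside the teacher's chosen list is rejected before any conversation starts.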

That structure also helps address one of the biggest concerns around classroom AI: unpredictability. Teachers are much more likely to use AI confidently when they know the task is framed, the expectations are clear, and the AI’s role is intentionally constrained. AI Chat supports that by centering the prompt design and lesson purpose rather than offering unrestricted exploration as the default.

There is also a practical classroom benefit to this kind of design: it reduces the intimidation factor for both students and teachers. Students do not need “more AI.” They need a clear task, a safe place to respond, and a sense of what the conversation is supposed to accomplish. Many teachers feel the same way. AI Chat makes classroom use feel more like a guided lesson and less like opening the door to an unknown system.

This approach promotes confidence without pretending AI needs no supervision. It respects the reality that teachers want innovation, but they also want boundaries. They want students to interact with AI, but not in a way that feels chaotic, untraceable, or disconnected from the lesson. AI Chat works because it treats safety, prompt design, and teacher control as core features, not optional extras.

In short, AI Chat is built to help teachers bring AI into the classroom with more confidence. It combines instructional prompting, structured interaction, and classroom-minded guardrails so teachers can use AI as part of a lesson without feeling like they are surrendering the lesson to the tool.