When schools evaluate AI tools, the first question should be simple: what student data is actually sent to the AI model?

For AI-assisted scoring in Innovation Assessments, our design goal is data minimization. The scoring request is built from the instructional context needed for evaluation, not from a student profile.

For example, an AI-assisted scoring request may include:

- The assignment prompt

- The rubric or teacher scoring guidance

- The student response text

- The question text and model answers, when relevant for short-answer scoring

It does not need to include separate student profile fields such as:

- Student name

- Email address

- Roster metadata

That distinction matters. A scoring model needs the work being scored and the teacher’s scoring context. It does not need a student’s identity in order to suggest a score or generate rubric-aligned feedback.

Our Design Principle: Data Minimization

We believe privacy claims should be specific. Rather than making vague statements about “secure AI,” we focus on a narrower and more verifiable principle: only send the minimum data required for the scoring task.

In practice, that means our AI-assisted scoring flow is designed so the model receives the assignment context and the response content, without separate student identity fields attached to the request.
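To make the allowlist idea concrete, here is a minimal Python sketch of how a scoring request could be assembled. It is not the Innovation Assessments implementation. The `Submission` record, its field names, `SCORING_FIELDS`, and `build_scoring_request` are all hypothetical, and the sketch stops at building the request rather than calling any model API.

```python
from dataclasses import dataclass
from typing import Optional, Tuple


@dataclass(frozen=True)
class Submission:
    # Hypothetical stored record: identity fields sit next to the work,
    # but only some of these fields are needed to score it.
    student_name: str
    email: str
    roster_section: str
    assignment_prompt: str
    rubric: str
    response_text: str
    # Optional fields, relevant only for short-answer scoring.
    question_text: Optional[str] = None
    model_answers: Tuple[str, ...] = ()


# Allowlist: the only fields that may appear in a scoring request.
# Anything not listed here (name, email, roster data) is left out by default.
SCORING_FIELDS = (
    "assignment_prompt",
    "rubric",
    "response_text",
    "question_text",
    "model_answers",
)


def build_scoring_request(sub: Submission) -> dict:
    """Build a scoring payload by copying only allowlisted fields."""
    request = {}
    for name in SCORING_FIELDS:
        value = getattr(sub, name)
        # Omit optional short-answer fields that were not supplied.
        if value is None or value == ():
            continue
        request[name] = value
    return request


sub = Submission(
    student_name="Jordan Lee",
    email="jlee@example.edu",
    roster_section="Period 3",
    assignment_prompt="Explain how plants make food.",
    rubric="Full credit: mentions light, carbon dioxide, water, and glucose.",
    response_text="Plants use light to turn CO2 and water into glucose. - Jordan",
)
req = build_scoring_request(sub)
print(sorted(req))  # ['assignment_prompt', 'response_text', 'rubric']
```

The sketch uses an allowlist rather than a denylist, so a profile field added to the record later is excluded by default instead of leaking until someone remembers to block it. The example also shows the limitation discussed in the next section: the student signed the answer, so the first name still travels inside `response_text`.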

What This Means in Plain English

If a teacher uses AI-assisted scoring, the AI is evaluating the response itself, not a named student record.

That said, there is an important limitation, and we want to state it clearly: if a student includes identifying information in the body of the response, such as signing their name at the end, that text may still be part of the scoring request, because it is part of the submitted work. In other words, we minimize identity data at the profile-field level, but we do not claim that every student response is fully anonymized.

That is why we avoid exaggerated claims. “Anonymous” is often too broad. “Minimized and identity-stripped at the profile-field level” is more accurate.

Why Trust Requires More Than Marketing

We do not think schools should trust privacy language just because it sounds reassuring. Trust comes from precision, consistency, and a willingness to describe limits.

A credible privacy statement should answer four questions:

- What data is sent?

- What data is not sent?

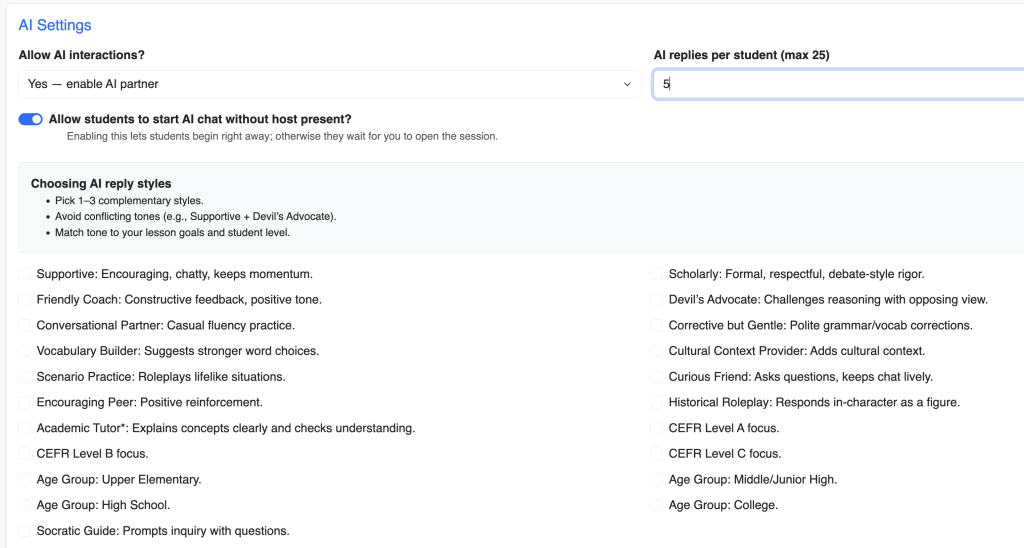

- Who can trigger the AI workflow?

- What are the known limitations?

Our goal is to answer those questions directly, in plain language, rather than hide behind general marketing phrases.

Our Commitment

We will continue to design AI features around necessity, not convenience. If a piece of student identity data is not required for the scoring task, it should not be part of the AI request.

That is the standard we think schools should expect from any education platform using AI.