I’ll be the first to admit that I have always been fascinated by new technology and computer innovation. But I know not everyone shares my enthusiasm. A number of my friends and acquaintances neither welcome AI nor share my excitement about its potential.

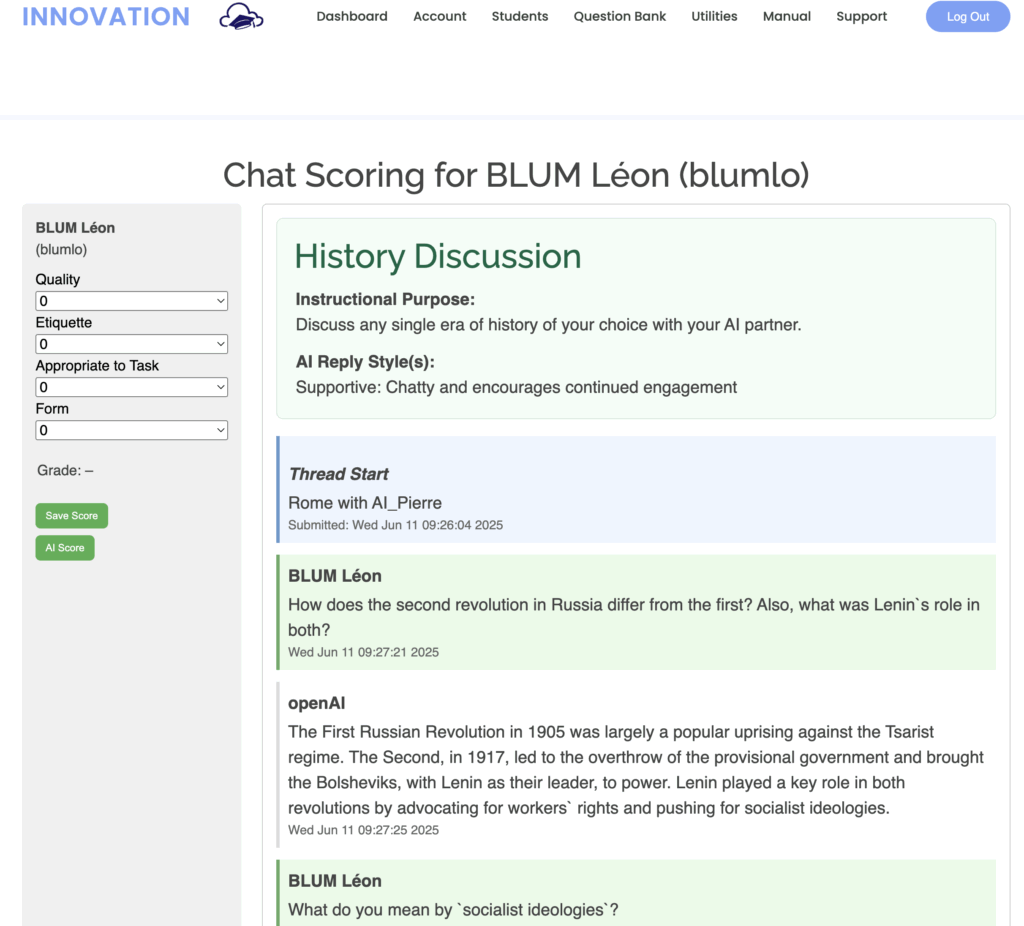

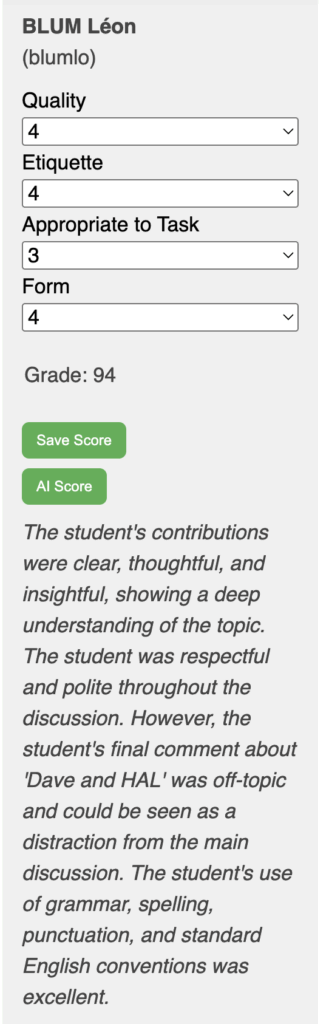

A number of valid criticisms emerge from articles on AI, especially in education. Student abuse is a primary concern. This is worse than the old days, when some students had an older sibling who wrote some of their papers for them. AI assistants are freely accessible on multiple devices and are experts in every field. Students who use them to do school assignments are engaging in “cognitive offloading,” which means they may not learn what is intended. Honestly, I believe that a huge crop of new graduates is getting away with this while educational practices are still trying to catch up. Can firms really trust any diploma granted between 2022 and whenever? I teach online, and solving this problem has occupied an enormous amount of my prep time.

The natural resources necessary to run AI are enormous. In typical insensitive capitalist fashion, in some places large tech firms are sucking in vast amounts of electricity and water to support AI data centers at the expense of their neighbors and the environment.

I have seen articles by students who are angry that their teachers are using AI to grade their work or that the PowerPoint slides and class materials were AI-generated.

But I am still not scared.

Socrates lamented the invention of writing because students who became literate would fail to develop their memory (Plato, Phaedrus 274c-275b).

In the 15th century, the Italian scribe Filippo de Strata railed against the new printing press technology. He said it was a threat to the artistry of manuscripts and a source of moral corruption (Latin address to Doge Nicolò Marcello, written between 1473 and 1474). Abbot Johannes Trithemius likewise worried that it would foster idleness among monks and diminish the spiritual benefits of hand-copying (In Praise of Scribes).

When I was in middle school in the late 1970s, handheld calculators had just become kind of affordable (my first one, a birthday gift, was over $100!). Older people said this was cheating and that kids would lose the ability to do math in their heads. (Okay, so for those of us already bad at math, this was either prescient or irrelevant.)

In the year before I retired, I had a parent conference with a mom whose kids had been homeschooled up to then. She spent a lot of time extolling the virtues of the good penmanship she had instilled in her children and chided the schools for no longer teaching cursive. I smiled and nodded whilst thinking that once outside the family foyer these kids would never pen anything longer than their signature.

I know, this is a good place for an eye-roll emoji. The reader may feel that an accusation of Luddism is hyperbole. But I think the analogy is apt. New technologies call old practices into question, and it is tempting to hold on to those practices because we have already mastered them, because we have invested so much in mastering them, and because we value them for that reason. Instructors who have been teaching for years have favorite assignments and projects that worked for years and are now unworkable because students will have an AI do them. They will have to let those go.

My experience last semester teaching AP French for an online school offered me many lessons in this very thing. When I took over the course from another instructor whose schedule had changed, I discovered that the students had been using translators for all of their work and had not been challenged on it. I made it my mission to thwart AI abuse in that course (see related blog posts), so I truly sympathize with the reader who thinks AI will destroy education.

But I’m still not scared of AI.

As with virtually every other technology that radically transforms society, the transition period is stressful. Where is this going? How will this affect me? My job?

This technological transformation is coming, like it or not. Like writing, printing, calculators, and similar “cognitive offloading” tools, AI will come because it is too useful to too many people to be simply dropped. At first, because it is new, there will be an adjustment period. But society will adapt, evolve, and rebalance because that is where our interest lies.

I don’t think AI is scary. I think AI is powerful.

And like any powerful tool, what matters is who wields it, and how.